Distributed Information Retrieval Support Framework

Distributed Information Retrieval (DIR) subsumes functionalities that seek to increase the efficiency and the effectiveness with which unstructured queries are evaluated across multiple collections.

Given a set of such queries and two or more target collections for their evaluation, there are three main functionalities that fall squarely within the scope of DIR:

- collection selection: the identification of the subset of target collections that appear to be the most promising candidates for the evaluation of a given query. The process relies upon goodness criteria and selection criteria: the first are used to rank the target collections from the most promising to the least promising, while the second are used to select collections based on their rank. Depending on the choice of goodness and selection criteria, the process may promote the efficiency or the effectiveness of query evaluation. In the first case, the output of the service is used to limit the cost of query evaluation only to the selected collections. In the second case, the output of the service is used to regulate the number of results retrieved from each collection, and thus to limit the number of irrelevant results that are ultimately presented to the user. The two usage models may well coexist within a single collection selection strategy.

- result fusion: the integration of the partial results obtained by evaluating a given query against (a selection of) the target collections. Typically, the process is one of harmonisation of result scores that have been assigned with respect to different retrieval models and content statistics. Accordingly, the process promotes the effectiveness of query evaluation.

- collection description: the synthesis and maintenance over time of summary information about the content of target collections, from partial inverted indices and language models to result traces for training or past queries. Collection description is only of indirect interest in the context of query evaluation and thus is not exposed by the Master service. Its output forms the basis upon which collections are ranked before being selected for query evaluation. It may also be required to normalise the scores with which the partial results of query evaluation are finally merged.

The DIR Master service offers reference implementations of these functionalities.

Architecture

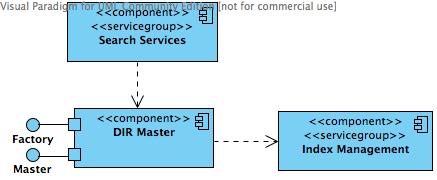

In a gCube infrastructure, the service operates within the context of the search framework. Its role is twofold: as a query pre-processor, it is invoked to perform collection selection prior to query evaluation; as a search operator, it is invoked to merge results after the query has been evaluated across the selected target collections. Within the implementation of the service, collection selection and result fusion are local processes. Collection description, however, relies on FullText Index services to localise and maintain statistics about the content of the target collections. The relationships between the Master service and the services that interact with it are illustrated in the accompanying component diagram.

Design

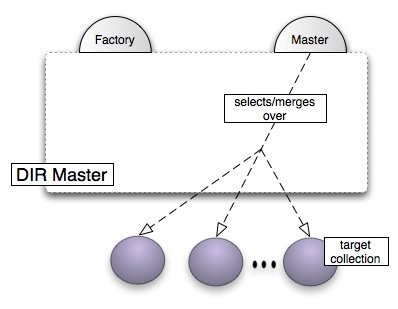

The design of the service is conceptually distributed across two port-types: the Master port-type and the Factory port-type.

Masters

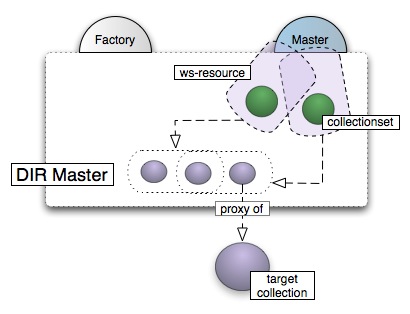

The Master port-type is stateful, in that it maintains summary information about the target collections, both in memory and on the local file system. Such local 'proxies' of the target collections are then grouped in potentially overlapping collection sets that represent the scope of execution for a class of distributed queries. Collection sets are then bound to the port-type interface on a per-request basis, in line with the implied resource pattern of WSRF. In particular, the pairing of the Master interface with a collection set yields a WS-Resource referred to as a Master.

Masters for collection sets of zero or more collections are created in response to client requests to the Factory port-type. After the binding, collections can be added to and removed from the bound collection sets via operations of the Master interface. For each bound collection, Masters interact with Index services in an attempt to localise information about the content of the collection (interactions are based on best-effort and caching strategies). In the reference implementation, the information forms a term histogram of the collection, i.e. a dictionary of the stems of the most content-bearing words of the collection content, each annotated with its frequency of occurrence within the collection.
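To make the notion of a term histogram concrete, the following is a minimal sketch in Python. It is not the reference implementation: the stopword list, the toy suffix-stripping stemmer `naive_stem`, and the `max_terms` cut-off used to approximate "most content-bearing words" are all assumptions made for illustration.

```python
from collections import Counter

# Illustrative stopword list (assumption); a real service would use a fuller one.
STOPWORDS = {"a", "an", "and", "in", "is", "of", "the", "to"}


def naive_stem(word):
    """Toy stemmer (assumption): strips a few common English suffixes."""
    for suffix in ("ing", "ed", "es", "s"):
        if word.endswith(suffix) and len(word) - len(suffix) >= 3:
            return word[: -len(suffix)]
    return word


def term_histogram(documents, max_terms=1000):
    """Return a dict mapping each stem to its number of occurrences
    across the documents of one collection, keeping the most frequent stems."""
    counts = Counter()
    for text in documents:
        for token in text.lower().split():
            token = token.strip(".,;:!?()\"'")
            if token and token not in STOPWORDS:
                counts[naive_stem(token)] += 1
    return dict(counts.most_common(max_terms))


histogram = term_histogram(["Markets rallied.", "The markets closed higher."])
```

Here the histogram counts raw occurrences of each stem; a variant that counts the number of documents containing each stem would follow the same shape.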

The localised information is stored in a cross-collection inverted index, the master index, and made available during selection and fusion. Collection selection is driven by input parameters, primarily a query and a selection criterion to apply over a ranking of the collections produced with respect to the query. In the reference implementation, the query is a bag of keywords and the collection retrieval inference network (CORI) is used for collection ranking [1]. CORI is a collection-level generalisation of the Bayesian Inference Network probabilistic model of retrieval for text documents; in the model, probabilities are based upon statistics that are analogous to term occurrence frequency (tf) and inverse document frequency (idf) in classic document retrieval. In particular, term frequency is replaced with document frequency (df) and inverse document frequency is replaced with inverse collection frequency (icf). One of three selection criteria may then be chosen to return the first n target collections in the ranking. In the TopN criterion, n is an absolute constant. In the BestScores criterion, n is derived with respect to a threshold on the relevance score. In the ResultDistribution criterion, n is derived from a distribution of the number of results to be returned to the user.
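The ranking and selection steps can be sketched as follows. This is an illustration in Python, not the service's code. It uses the CORI belief formula as commonly stated in the literature, with the usual default constants (50, 150 and a default belief of 0.4). The shape of `master_index` (term to per-collection document frequency) and of `collection_sizes` (collection to word count) is an assumption, as are all function names. The ResultDistribution criterion is omitted because the text does not specify the distribution it uses.

```python
import math


def cori_rank(query_terms, master_index, collection_sizes, b=0.4):
    """Rank collections for a bag-of-keywords query with CORI.

    master_index: term -> {collection_id: df}  (assumed layout)
    collection_sizes: collection_id -> number of words in the collection
    """
    n_collections = len(collection_sizes)
    avg_cw = sum(collection_sizes.values()) / n_collections
    scores = {}
    for cid, cw in collection_sizes.items():
        beliefs = []
        for term in query_terms:
            postings = master_index.get(term, {})
            cf = len(postings)  # number of collections containing the term
            if cf == 0:
                beliefs.append(b)  # unknown term: default belief only
                continue
            df = postings.get(cid, 0)
            t = df / (df + 50 + 150 * cw / avg_cw)           # df component
            i = math.log((n_collections + 0.5) / cf) / math.log(n_collections + 1.0)  # icf component
            beliefs.append(b + (1 - b) * t * i)
        scores[cid] = sum(beliefs) / len(beliefs) if beliefs else 0.0
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)


def top_n(ranking, n):
    """TopN criterion: n is an absolute constant."""
    return [cid for cid, _ in ranking[:n]]


def best_scores(ranking, threshold):
    """BestScores criterion: keep collections scoring at or above a threshold."""
    return [cid for cid, score in ranking if score >= threshold]


master_index = {"market": {"news": 40, "finance": 90}, "goal": {"sports": 70}}
collection_sizes = {"news": 1000, "finance": 1000, "sports": 1000}
ranking = cori_rank(["market"], master_index, collection_sizes)
```

With these toy statistics, `top_n(ranking, 2)` selects `finance` and `news`, since only those two collections contain the query term and `finance` has the higher document frequency.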

As to result fusion, this is driven by an input query and two or more references to typically remote resultsets. The reference implementation guarantees a consistent merging of the resultsets by re-computing result scores with exact, non-heuristic techniques. In particular, Masters rely on: (i) global collection content statistics available in the master index, and (ii) term occurrence statistics for each result (such as the number of terms in the corresponding document and the frequency with which the query terms occur in the document). Effectively, the availability of collection-wide and result-wide statistical information allows the service to consistently re-rank the virtual collection comprised of all the documents identified in the resultsets.
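The idea of re-scoring partial results against shared statistics can be sketched as below. The text does not name the scoring formula used by the reference implementation, so this Python example assumes a simple length-normalised tf-idf; the record layout (`id`, `length`, `tf`) and the names `fuse`, `global_df` and `total_docs` are likewise hypothetical.

```python
import math


def fuse(query_terms, result_sets, global_df, total_docs):
    """Merge result sets by re-scoring every result with global statistics.

    result_sets: list of lists of dicts with keys
        "id" (document id), "length" (terms in the document),
        "tf" (query term -> occurrences in the document)
    global_df: term -> number of documents containing it, over all collections
    total_docs: number of documents over all collections
    """
    merged = {}
    for results in result_sets:
        for result in results:
            score = 0.0
            for term in query_terms:
                tf = result["tf"].get(term, 0)
                df = global_df.get(term, 0)
                if tf == 0 or df == 0:
                    continue
                idf = math.log(1 + total_docs / df)
                score += (tf / result["length"]) * idf
            merged[result["id"]] = score  # same id from two sets is kept once
    return sorted(merged.items(), key=lambda kv: kv[1], reverse=True)


set_a = [{"id": "d1", "length": 100, "tf": {"market": 5}}]
set_b = [
    {"id": "d2", "length": 50, "tf": {"market": 4}},
    {"id": "d3", "length": 10, "tf": {}},
]
fused = fuse(["market"], [set_a, set_b], {"market": 10}, total_docs=100)
```

Because every result is scored with the same document frequencies and the same formula, the scores are directly comparable regardless of which collection produced the result.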

At the time and within the scope of their creation, a selection of the state of Masters is published in the Information System. In WSRF terminology, these are the Resource Properties that identify the target collections and by which Masters can be discovered by clients. The reference implementation publishes only the identifiers of the bound collection set.

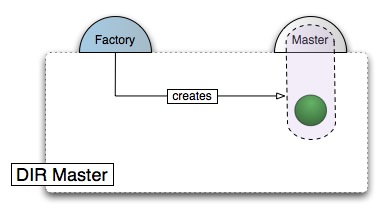

The Factory

The Factory is the point of contact to the Master for clients that wish to create Masters for zero or more target collections, starting from their public identifiers. In this role, it is stateless.
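The division of labour between the stateless Factory and the stateful Masters can be modelled in a few lines. This Python sketch is purely illustrative: the class and method names are hypothetical and do not reproduce the actual port-type interfaces, which are WSRF web service operations.

```python
from dataclasses import dataclass, field


@dataclass
class Master:
    """Stateful stand-in for a Master WS-Resource (hypothetical model)."""
    collection_ids: set = field(default_factory=set)

    def add_collection(self, collection_id):
        self.collection_ids.add(collection_id)

    def remove_collection(self, collection_id):
        self.collection_ids.discard(collection_id)

    def resource_properties(self):
        # Only the identifiers of the bound collection set are published.
        return {"collections": sorted(self.collection_ids)}


class Factory:
    """Stateless: keeps no reference to the Masters it creates."""

    @staticmethod
    def create_master(collection_ids=()):
        return Master(set(collection_ids))


master = Factory.create_master(["col-a", "col-b"])
master.add_collection("col-c")
master.remove_collection("col-a")
```

A Master can also be created for an empty collection set and populated later, which mirrors the "zero or more target collections" wording above.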

The public interface of the Factory port-type can be found here.

[1] J. Callan. Advances in Information Retrieval, chapter: Distributed Information Retrieval, pages 127–150. Kluwer Academic Publishers, 2000.