Legacy applications integration: users' feedback

In this document, we try to clarify our (IRD + FAO) current approach in iMarine regarding the use of a common WPS server with legacy applications. The point of view is that of data managers working in marine domain laboratories who would like to deploy processes on a WPS server and use the server as an opportunity to make these processes available to additional people (scientists, statisticians...) working with various datasets. However, once a process is deployed, additional work is needed before the original "raw process" can really be used by anybody, whatever their skills or the structure of their datasets.

This feedback and these guidelines apply regardless of the server location, inside or outside the iMarine infrastructure. Indeed, beyond the iMarine framework, we think that the issues we are currently facing and the related solutions we are building remain of interest to other people sharing the same goal.

Contents

- 1 Main objectives of processes (legacy applications) providers

- 2 Analysis of needs for scientists

- 3 Enrichment of Legacy applications: challenges for processes providers (scientists and related IT teams)

- 4 Current blocking points with the WPS Server in the framework of iMarine

- 5 Summary of the guidelines and related schema (current implementation in R package)

- 6 Example of R functions

Main objectives of processes (legacy applications) providers

Our initial goal is to use the iMarine WPS server to enable some IRD & FAO processes:

- 1) to be described so that users can discover existing processes and get the source code,

- 2) to become more generic in order to be reused with additional applications, people and datasets,

- 3) to be executed on any common WPS server by suggesting some good practices to the scientists. Indeed, raw processes, even on a WPS server, can be useless for the community when they are not well described and/or enriched to enable a wider use (as one can expect once they are made available on a WPS server),

- 4) to be deployed by data managers (IRD, FAO...) without having to open & read the source code (by using only metadata: describeProcess.xml).

Analysis of needs for scientists

Here is a summary of the main needs:

- friendly tools for data processing (same GUIs whatever the programming languages),

- setting up a catalog of processes (through proper metadata),

- setting up a search engine to enable process discovery,

- enabling each process to become more generic than the original version created for a given data source & related datasets (meaning specific data structures & data formats),

- enabling each process to be executed online, through a WPS server, without having to deal with the underlying programming language.

Indeed, it is key to take into account the heterogeneity of user skills in marine ecology labs, and we think that the iMarine WPS will better reach its goal if we solve the following blocking points:

- metadata:

  - metadata are required for process catalogs and discovery,

  - we want to use the metadata to re-execute an algorithm or indicator ("replicable science") with the same input dataset (when necessary by adding new criteria to filter the input dataset), and to let some of the outputs be used as inputs of other processes or by applications like the Tabular data Manager, x-Search or Fact sheet generator.

- data formats: enabling users to provide the input dataset of a process in a friendly data format is crucial (some of them need to work "as usual" with their favorite format): CSV, Excel, shapefiles... Otherwise some people won't use the server. GML (& WFS) is too exclusive for now. Supporting these formats is possible by using a data abstraction library like ogr (through rgdal in R).

- access protocols: in addition to data formats, if we want the WPS server to be used with these formats, we think the user should be able to provide the dataset through:

  - upload of the dataset in any usual data format (cf. previous item). Upload is simple and everybody understands it. We want to indicate that upload is possible by using (as an ad hoc convention) a specific data input parameter in the OGC WPS metadata. Upload can use basic http/ftp protocols, which is a good option for everybody because 1) it's simple and 2) not all data sources are accessible with the more sophisticated access protocols described below.

  - WFS, which is just another access protocol required for an SDI (like WCS, OPeNDAP...) but is, for now, ignored by most of the colleagues in our domain, who won't be able to deliver their datasets this way. Moreover, not all research units have spatial data servers (Mapserver, Geoserver...). Even where such tools exist, not all implementations of WFS enable the "outputFormat" option to be used with data formats like CSV, shp... Indeed, it seems that the only official OGC format for WFS is GML (others are used as extensions of the specification, see http://mapserver.org/fr/ogc/wfs_server.html) and, anyway, none of our colleagues work with the WFS access protocol, whatever the underlying data format. For instance, the R COST package for DCF data currently uses mainly CSV files and no real spatial geometry data type.

- data structures: most users are interested in running the processes set up by their colleagues on other datasets. However, processes are usually created to fit specific needs (a specific data structure coming from a specific data source) and with a specific programming language (R, Matlab, Java, IDL..). The code needs to be enriched to become more generic (being on a WPS server is not enough) so that it can be executed with similar data structures. Most users only know a single programming language and won't be able to modify the code of the original process. This can be fixed by embedding in the process a function that maps between data structures. This has been done in R for iMarine.
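The data structure mapping mentioned in the last item can be sketched in a few lines of base R. This is only a minimal illustration, not the function from the IRD package: the name `map_data_structure`, its arguments and the column names used in the example are all hypothetical.

```r
# Hypothetical sketch: rename and coerce the columns of an input dataset so
# that it matches the data structure expected by a process.
#   column_mapping: named character vector, c(<input column> = <expected column>)
#   column_types:   named list of coercion functions, keyed by expected column
map_data_structure <- function(dataset, column_mapping, column_types = list()) {
  missing_cols <- setdiff(names(column_mapping), names(dataset))
  if (length(missing_cols) > 0) {
    stop("Input dataset lacks column(s): ", paste(missing_cols, collapse = ", "))
  }
  # Keep only the mapped columns, in the order given by the mapping
  mapped <- dataset[, names(column_mapping), drop = FALSE]
  names(mapped) <- unname(column_mapping)
  # Apply the requested type conversions
  for (col in names(column_types)) {
    mapped[[col]] <- column_types[[col]](mapped[[col]])
  }
  mapped
}

# Example: a dataset with made-up column labels fed to a process that
# expects the columns "species" and "catch"
input_data <- data.frame(SPECIES = c("YFT", "SKJ"), QTY = c("10.5", "3"),
                         stringsAsFactors = FALSE)
mapped_data <- map_data_structure(
  input_data,
  column_mapping = c(SPECIES = "species", QTY = "catch"),
  column_types = list(catch = as.numeric)
)
```

A real mapping function would also have to translate code lists (species, gears...), which this sketch leaves out.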

Indeed, more than delivering as many processes as possible, the key issue for a community aiming at sharing processes is to set up generic methods to solve the main challenges listed below:

- challenges for developers / data managers:

  - to enrich the processes of their colleagues so that they can accept additional data formats as inputs (including complex data formats like WFS & GML, even if very few scientists are using them). This often requires a modification of the native data input reading function (used to create an R object / R data in our case): we fixed this by adding a data format abstraction function using libraries like gdal/ogr. A similar function can be added to deliver multiple formats for the data output writing.

  - to enable these processes to be executed with any dataset whose data structure complies with the one expected by the process on the WPS server, even if the datasets come from different data sources with different semantics (and not only datasets from the source the script was created for). This can be achieved by adding a data structure mapping function (e.g. to manage the semantics of the labels given to some columns or codelists, as well as data types..). This makes it possible to manage the mapping between the dataset used as input (a priori unknown) and the data structure expected by the process (known). By doing so we aim to enable, for example, FAO datasets to be used as inputs of IRD processes.

  - lineage: to keep track of each execution of a process by writing the metadata describing the inputs, the process itself and the outputs. To create such metadata, we suggest a function writing RDF metadata (by using Jena in R with the rrdf package). Of course, depending on the needs, it could be interesting to do it differently (in Java with OGC metadata). With RDF, the goal is to enable the outputs of the iMarine WPS server to be delivered as Linked Open Data.

  - to manage interoperability issues so that the WPS server can be used within other applications. This is facilitated by connecting the data sources (like databases managed with Postgres / Postgis or netCDF-CF files) and related views / subsets to spatial data servers (like Mapserver, Constellation) implementing proper standards for interoperability between SDIs (for both formats and access protocols: GML/WFS, netCDF/OPeNDAP). This has to be done by data managers and is specific to each data source and related spatial server.

  - to use WPS processes for the pre-calculation of large sets of indicators (to generate the content of applications or Web sites like Atlas, fact sheets...),

  - to set up a search engine to enable process discovery. We suggest using either OGC or RDF metadata to reuse existing search engines (geonetwork, XSearch..).

- challenges for users:

  - to find the set of metadata elements required to describe and understand processes, and to figure out how to properly use the discovered processes,

  - once a process is on the WPS server, it's not obvious that users (colleagues and partners) will be able to execute it online. Indeed, as previously said, to facilitate the use of each process by everybody, various data formats and access protocols must be accepted for the data inputs. Of course, once the data format abstraction and data structure mapping functions are in place, the WPS metadata and related input parameters have to be written accordingly.

  - to be able to use the WPS server through friendly clients like Terradue Web Client ([1]), GIS desktop applications... Indeed, most of the users won't be able to write a proper WPS URL by themselves.
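To illustrate why a friendly client matters, here is a sketch of what such a client has to assemble for the user: a key-value-pair Execute request in the style of WPS 1.0.0. The function name, server URL, process identifier and input names are placeholders chosen for the example, and a given server implementation may expect a different encoding of the `DataInputs` parameter.

```r
# Hypothetical helper: build a WPS 1.0.0 Execute URL (KVP encoding).
#   inputs: named list, one element per literal input of the process
build_wps_execute_url <- function(server_url, identifier, inputs) {
  stopifnot(is.list(inputs), !is.null(names(inputs)), all(nzchar(names(inputs))))
  # Percent-encode each value so that URLs or spaces survive inside the query
  encoded <- vapply(inputs,
                    function(v) utils::URLencode(as.character(v), reserved = TRUE),
                    character(1))
  data_inputs <- paste(names(inputs), encoded, sep = "=", collapse = ";")
  paste0(server_url,
         "?service=WPS&version=1.0.0&request=Execute",
         "&identifier=", utils::URLencode(identifier, reserved = TRUE),
         "&DataInputs=", data_inputs)
}

# Example with made-up values
example_url <- build_wps_execute_url(
  "http://example.org/wps",
  "my.process",
  list(species = "YFT", year = 2010)
)
```

An input provided by reference (for instance the http address of an uploaded CSV file) can be passed the same way, since the value is percent-encoded before being added to the URL.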

Current blocking points with the WPS Server in the framework of iMarine

Even if we know what we want to do, we currently face a set of issues slowing down our work:

- WPS general issues:

  - the different WPS server implementations (52 North, Geoserver, Geotoolkit....) do not implement the OGC specifications (WPS, WFS..) in the same way. This is very confusing for users providing their processes,

  - with the 52 North server and related Java API: the current WPS server doesn't make it possible to write any kind of describeProcess.xml from R code: the Java methods should be enriched to better manage Literal or Complex Input or Output parameters and other WPS metadata elements,

  - regarding the use of WFS as a WPS input data format: the value of WFS for data interoperability is not in question, it's an obvious need for an infrastructure like iMarine. However, we want to manage both approaches:

    - WFS for machines or people who know about it. However, depending on the version, sharing WFS among existing implementations (Geoserver, MapServer, 52°North...) is still a challenge,

    - usual protocols (http or upload) for the others (the majority).

- iMarine WPS server issues:

  - updating the metadata (describeProcess.xml) on the WPS server is currently too complicated:

    - either the administrator of the iMarine WPS has to understand the R code in order to write a Java class enabling it on the grid / Hadoop. This is not sustainable with complicated processes,

    - or we, IRD or FAO, have to deploy it directly and write the Java code on the infrastructure: this is not sustainable and very few organizations will have the required programming skills.

  - we are convinced of the interest of having a WPS server in an infrastructure like iMarine, but the worst scenario would be to spend a lot of time collecting and deploying the processes of our partners, only to tell them that they can use them through WFS and the GML format but not in the way they usually use them.

  - the "describeProcess.xml" of the same process differs depending on whether the process is deployed on a normal WPS server or on a Hadoop server: it should be the same for the users.

At this stage, writing and updating describeProcess.xml should be facilitated.
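As an illustration of what "facilitated" could mean, the sketch below generates a simplified process description from a few R values, so that the metadata is written next to the R code rather than in Java. It is an assumption-laden example: the function names are hypothetical, only literal string parameters are handled, and the XML follows the general layout of a WPS 1.0.0 process description without having been validated against the schema or against a particular server.

```r
# Escape the characters that are special in XML text nodes.
xml_escape <- function(x) {
  x <- gsub("&", "&amp;", x, fixed = TRUE)
  x <- gsub("<", "&lt;", x, fixed = TRUE)
  gsub(">", "&gt;", x, fixed = TRUE)
}

# One literal (string) parameter; `tag` is "Input" or "Output".
literal_param_xml <- function(tag, id, title) {
  occurs <- if (tag == "Input") ' minOccurs="1" maxOccurs="1"' else ""
  body <- if (tag == "Input") {
    "<LiteralData><ows:DataType>string</ows:DataType><ows:AnyValue/></LiteralData>"
  } else {
    "<LiteralOutput><ows:DataType>string</ows:DataType></LiteralOutput>"
  }
  paste0("    <", tag, occurs, ">\n",
         "      <ows:Identifier>", xml_escape(id), "</ows:Identifier>\n",
         "      <ows:Title>", xml_escape(title), "</ows:Title>\n",
         "      ", body, "\n",
         "    </", tag, ">\n")
}

# params: named character vector, c(<identifier> = <title>)
params_xml <- function(tag, params) {
  paste0(vapply(seq_along(params),
                function(i) literal_param_xml(tag, names(params)[i], params[[i]]),
                character(1)),
         collapse = "")
}

# Hypothetical generator of a simplified process description.
describe_process_xml <- function(identifier, title, inputs, outputs) {
  paste0(
    '<wps:ProcessDescriptions xmlns:wps="http://www.opengis.net/wps/1.0.0"',
    ' xmlns:ows="http://www.opengis.net/ows/1.1"',
    ' service="WPS" version="1.0.0" xml:lang="en">\n',
    '  <ProcessDescription wps:processVersion="1.0">\n',
    "    <ows:Identifier>", xml_escape(identifier), "</ows:Identifier>\n",
    "    <ows:Title>", xml_escape(title), "</ows:Title>\n",
    "    <DataInputs>\n", params_xml("Input", inputs), "    </DataInputs>\n",
    "    <ProcessOutputs>\n", params_xml("Output", outputs), "    </ProcessOutputs>\n",
    "  </ProcessDescription>\n",
    "</wps:ProcessDescriptions>\n")
}

# Example with made-up identifiers
description <- describe_process_xml(
  "catch.by.species", "Catches by species",
  inputs = c(species = "Species code", year = "Year"),
  outputs = c(result = "Output file")
)
cat(description)
```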

Questions for the sustainability of this approach:

- who is in charge of deploying the process? Scientists can't...

- how long should it take to deploy the process when a proper "describeProcess.xml" is provided? What are the main steps? Who is in charge of them? Could it be automated?

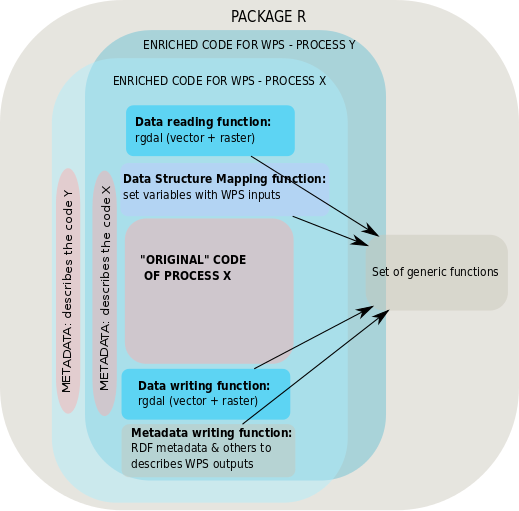

Summary of the guidelines and related schema (current implementation in R package)

To summarize, according to our discussions and beyond the technical aspects, the current challenge is to provide a set of methods (delivered as a set of R functions) facilitating both the deployment of existing processes on a WPS server (using IRD or FAO examples) and their use by a wider community (machines and people):

- abstraction of data format and access protocols: Data reading function & Data writing function,

- abstraction of the data structure: Data Structure Mapping function,

- Lineage (for replicable science): Metadata writing function which describes:

  - the execution of the process,

  - the output files with relevant metadata (RDF, OGC...) with proper tags (species, fishing gears...).
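The first function of this list, the data reading function, could look like the following sketch. It is not the implementation of the IRD package: the name `read_input_dataset` is hypothetical, the reader is chosen from the file extension only, and the shapefile branch assumes that the rgdal package mentioned earlier is installed.

```r
# Hypothetical data reading function: the process calls this single entry
# point and never deals with the input format itself.
read_input_dataset <- function(path) {
  ext <- tolower(tools::file_ext(path))
  switch(ext,
    csv = utils::read.csv(path, stringsAsFactors = FALSE),
    tsv = ,
    txt = utils::read.delim(path, stringsAsFactors = FALSE),
    shp = {
      # Assumption: spatial formats are delegated to OGR through rgdal
      if (!requireNamespace("rgdal", quietly = TRUE)) {
        stop("The rgdal package is required to read shapefiles")
      }
      rgdal::readOGR(dsn = dirname(path),
                     layer = tools::file_path_sans_ext(basename(path)),
                     verbose = FALSE)
    },
    stop("Unsupported input format: ", ext)
  )
}

# Example: the same call works whatever the format of the uploaded file
csv_path <- tempfile(fileext = ".csv")
write.csv(data.frame(species = c("YFT", "SKJ"), catch = c(10.5, 3)),
          csv_path, row.names = FALSE)
catches <- read_input_dataset(csv_path)
```

A matching data writing function can follow the same pattern, dispatching on the output format requested by the user.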

The current IRD R package with the related functions is available online [2]. For now this package focuses on fisheries activity indicators (with datasets related to the Tuna Atlas in the case of IRD). The same approach will be followed with netCDF-CF data sources to enrich biological observations with environmental parameters through an R process.

The schema illustrates which functions we suggest adding to enrich each process on a WPS server.

Example of R functions

- SPARQL Query:

      # Resolve a FAO identifier to the matching URI in the Ecoscope knowledge base.
      # Returns NA when the SPARQL endpoint has no match.
      FAO2URIFromEcoscope <- function(FAOId) {
        if (! require(rrdf)) {
          stop("Missing rrdf library")
        }
        if (missing(FAOId) || is.null(FAOId) || is.na(FAOId) || nchar(FAOId) == 0) {
          stop("Missing FAOId parameter")
        }
        # Ask the remote endpoint for the resource carrying this FAO identifier
        sparqlResult <- sparql.remote("http://ecoscopebc.mpl.ird.fr/joseki/ecoscope",
          paste("PREFIX ecosystems_def: <http://www.ecoscope.org/ontologies/ecosystems_def/> ",
                "SELECT * WHERE { ?uri ecosystems_def:faoId '", FAOId, "'}", sep="")
        )
        # Keep the first match only
        if (length(sparqlResult) > 0) {
          return(as.character(sparqlResult[1, "uri"]))
        }
        return(NA)
      }
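Since the identifier is pasted directly into the query text, a stray quote in `FAOId` would break the query. One possible safeguard, sketched below, is to build the query in a separate function that first checks the identifier. The helper name is hypothetical, and the accepted character set (letters, digits, underscore, dot, hyphen) is an assumption about what FAO identifiers look like, not something stated by the original code.

```r
# Hypothetical helper: build the SPARQL query used above, refusing
# identifiers that could alter the structure of the query.
build_fao_id_query <- function(FAOId) {
  if (!grepl("^[A-Za-z0-9_.-]+$", FAOId)) {
    stop("Unexpected characters in FAOId: ", FAOId)
  }
  paste0("PREFIX ecosystems_def: <http://www.ecoscope.org/ontologies/ecosystems_def/> ",
         "SELECT * WHERE { ?uri ecosystems_def:faoId '", FAOId, "'}")
}

# Example with a three-letter species code
query <- build_fao_id_query("YFT")
```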

- Writing RDF statements:

      # Write the RDF metadata describing one execution of a process (lineage).
      # titles: list whose elements are either a title string or c(lang, title).
      # subjects: FAO identifiers, replaced by Ecoscope URIs when they can be resolved.
      buildRdf <- function(rdf_file_path, rdf_subject, titles=c(), descriptions=c(),
                           subjects=c(), processes=c(), data_output_identifier=c(),
                           start=NA, end=NA, spatial=NA) {
        #data_input=c(),
        if (! require(rrdf)) {
          stop("Missing rrdf library")
        }
        store <- new.rdf(ontology=FALSE)
        add.prefix(store,
                   prefix="resources_def",
                   namespace="http://www.ecoscope.org/ontologies/resources_def/")
        add.prefix(store,
                   prefix="ical",
                   namespace="http://www.w3.org/2002/12/cal/ical/")
        add.prefix(store,
                   prefix="dct",
                   namespace="http://purl.org/dc/terms/")
        #type
        add.triple(store,
                   subject=rdf_subject,
                   predicate="http://www.w3.org/1999/02/22-rdf-syntax-ns#type",
                   object="http://www.ecoscope.org/ontologies/resources_def/indicator")
        #process (one triple per process, nothing written when none is given)
        for (process.current in processes) {
          add.triple(store,
                     subject=rdf_subject,
                     predicate="http://www.ecoscope.org/ontologies/resources_def/usesProcess",
                     object=process.current)
        }
        #has_data_input (disabled for now)
        #add.data.triple(store,
        #  subject=rdf_subject,
        #  predicate="http://www.ecoscope.org/ontologies/resources_def/has_data_input",
        #  data=data_input)
        #identifier of the data output
        for (identifier.current in data_output_identifier) {
          add.data.triple(store,
                          subject=rdf_subject,
                          predicate="http://purl.org/dc/elements/1.1/identifier",
                          data=identifier.current)
        }
        #title
        for (title.current in titles) {
          if (length(title.current) == 2) {
            #here we know the language attribute
            add.data.triple(store,
                            subject=rdf_subject,
                            predicate="http://purl.org/dc/elements/1.1/title",
                            lang=title.current[1],
                            data=title.current[2])
          } else {
            #here we don't know it
            add.data.triple(store,
                            subject=rdf_subject,
                            predicate="http://purl.org/dc/elements/1.1/title",
                            data=title.current)
          }
        }
        #description
        for (description.current in descriptions) {
          add.data.triple(store,
                          subject=rdf_subject,
                          predicate="http://purl.org/dc/elements/1.1/description",
                          data=description.current)
        }
        #temporal extent
        if (! is.na(start)) {
          add.data.triple(store,
                          subject=rdf_subject,
                          predicate="http://www.w3.org/2002/12/cal/ical/dtstart",
                          data=start)
        }
        if (! is.na(end)) {
          add.data.triple(store,
                          subject=rdf_subject,
                          predicate="http://www.w3.org/2002/12/cal/ical/dtend",
                          data=end)
        }
        #subject: use the Ecoscope URI when it exists, the raw label otherwise
        for (subject.current in subjects) {
          URI <- FAO2URIFromEcoscope(subject.current)
          if (! is.na(URI)) {
            add.triple(store,
                       subject=rdf_subject,
                       predicate="http://purl.org/dc/elements/1.1/subject",
                       object=URI)
          } else {
            add.data.triple(store,
                            subject=rdf_subject,
                            predicate="http://purl.org/dc/elements/1.1/subject",
                            data=subject.current)
          }
        }
        #spatial extent
        if (! is.na(spatial)) {
          add.data.triple(store,
                          subject=rdf_subject,
                          predicate="http://purl.org/dc/terms/spatial",
                          data=spatial)
        }
        save.rdf(store=store, filename=rdf_file_path)
      }
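A call to `buildRdf` could look like the sketch below. All values are made up for the illustration: the URIs under example.org, the file names, the dates and the use of a WKT string for the spatial extent are assumptions, not conventions documented by the package. The call is only attempted when `buildRdf` is defined and the rrdf package is installed, and resolving the subject requires access to the Ecoscope SPARQL endpoint.

```r
# Hypothetical metadata for one execution of a process
indicator_metadata <- list(
  rdf_file_path = file.path(tempdir(), "indicator.rdf"),
  rdf_subject = "http://www.example.org/indicators/catch_yft_2010",
  # Titles with a language tag are given as c(lang, title)
  titles = list(c("en", "Yellowfin tuna catches in 2010"),
                c("fr", "Captures d'albacore en 2010")),
  descriptions = c("Total catches of yellowfin tuna by month"),
  subjects = c("YFT"),
  processes = "http://www.example.org/processes/catch_by_species",
  data_output_identifier = "catch_yft_2010.csv",
  start = "2010-01-01",
  end = "2010-12-31",
  spatial = "POLYGON((-20 -10, 20 -10, 20 10, -20 10, -20 -10))"
)

# Write the RDF file only when the function and its dependency are available
if (exists("buildRdf") && requireNamespace("rrdf", quietly = TRUE)) {
  do.call(buildRdf, indicator_metadata)
}
```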