Difference between revisions of "File-Based Access"

Revision as of 15:39, 28 February 2012

As part of the Data Access and Storage Facilities, a cluster of components within the system focuses on standards-based, structured access to and storage of files of arbitrary size.

This document outlines their design rationale, key features, and high-level architecture, as well as the options for their deployment.

Overview

Remote access and storage of unstructured bytestreams, or files, can be provided through a standards-based, POSIX-like API which supports the organisation and operations normally associated with local file systems.

The API is provided by a set of components, most notably a client library and a service based on a range of site-local back-ends, including MongoDB and Terrastore. The library acts as a facade to the service and allows clients to download, upload, remove, add, and list files. It is also possible to remove the contents of a remote directory or to list the objects in a remote directory.

Files have owners, and owners may allow a range of users to download and share the files. Through the use of metadata, the library allows hierarchical organisation of the data on top of the flat storage provided by the service's back-ends.
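As a rough illustration of how folder-style operations can sit on top of flat storage, the sketch below keeps each file under a single key and derives directory listings from the stored paths. The `FlatStore` class and its method names are hypothetical and are not the library's actual API; the in-memory dictionary merely stands in for a back-end such as MongoDB or Terrastore.

```python
from dataclasses import dataclass, field


@dataclass
class FlatStore:
    """Hypothetical in-memory stand-in for a flat back-end: one key, one record."""

    records: dict = field(default_factory=dict)

    def upload(self, path: str, data: bytes, owner: str) -> None:
        # The remote path is kept as metadata; the store itself has no folders.
        self.records[path] = {"owner": owner, "type": "file", "data": data}

    def download(self, path: str) -> bytes:
        return self.records[path]["data"]

    def remove(self, path: str) -> None:
        del self.records[path]

    def list_dir(self, directory: str) -> list:
        # Derive the direct children of a directory from the stored paths alone.
        prefix = directory.rstrip("/") + "/"
        children = set()
        for path in self.records:
            if path.startswith(prefix):
                children.add(path[len(prefix):].split("/", 1)[0])
        return sorted(children)

    def remove_dir(self, directory: str) -> None:
        # Remove every record stored below the given directory.
        prefix = directory.rstrip("/") + "/"
        for path in [p for p in self.records if p.startswith(prefix)]:
            del self.records[path]


store = FlatStore()
store.upload("/docs/a.txt", b"alpha", owner="alice")
store.upload("/docs/img/b.png", b"beta", owner="alice")
print(store.list_dir("/docs"))
```

The point of the design is that the back-end never needs to understand directories: listing and recursive removal are computed by the client side from metadata.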

Key features

The subsystem provides the following key features:

- structured file storage

- Clients can create folder hierarchies, where folders are encoded as file metadata and do not require direct support in the storage back-end.

- secure file storage

- File access is authenticated against access rights set by file owners, including private, group, and public access rights.

- scalable file storage

- Files are stored in chunks, and chunks are distributed across clusters of servers based on the workload of individual servers.

- fault-tolerant file storage

- Files are asynchronously replicated across the servers of a cluster for data recovery and redundancy.
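The private, group, and public access rights listed above could be checked along the following lines. This is only a sketch: the `Access`, `FileMeta`, and `can_download` names are hypothetical, and the rule that an owner can always read their own file is an assumption rather than something the source states.

```python
from dataclasses import dataclass
from enum import Enum


class Access(Enum):
    PRIVATE = "private"
    GROUP = "group"
    PUBLIC = "public"


@dataclass(frozen=True)
class FileMeta:
    """Hypothetical per-file metadata record."""

    owner: str
    group: str
    access: Access


def can_download(meta: FileMeta, user: str, user_groups: set) -> bool:
    # Assumption: the owner always has access to their own file.
    if user == meta.owner or meta.access is Access.PUBLIC:
        return True
    # Group access requires membership in the file's group.
    if meta.access is Access.GROUP:
        return meta.group in user_groups
    # Private files are visible to the owner only.
    return False


report = FileMeta(owner="alice", group="research", access=Access.GROUP)
print(can_download(report, "bob", {"research"}))
```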

Design

Philosophy

The main goal of this library is to let clients navigate folders on a remote storage system and download and upload files while masking the back-end system. The library is designed to preserve a unified interface that is general enough to cover the variety of File Storage Service back-ends and that shields clients from their differences. Its two layers, a core library and a wrapper library, permit the use of the library either in standalone mode or within the gCube framework.

Architecture

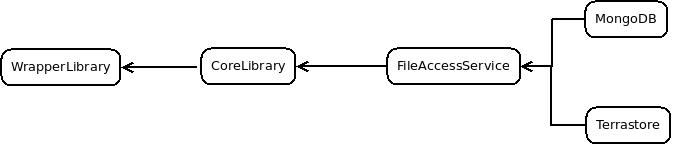

The library is divided into two layers: a core library and a wrapper library. The core library is for general-purpose use outside the gCube framework. The wrapper library is designed for use inside the gCube framework, and the interaction between the two layers is what permits the use of the library within gCube. The wrapper library interacts with the IS to discover the server resources that the core library will use. The core library interacts with a File Storage Service back-end, which is responsible for storing the data. At this time, two kinds of File Storage Service are supported: Terrastore and MongoDB.
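The split between the two layers can be pictured with the sketch below. All names are hypothetical: `CoreClient` stands for the standalone core layer, `InformationSystemStub` stands in for the IS (taken here to mean the framework's Information System, which is an assumption), and `build_wrapped_client` plays the role of the wrapper that discovers servers and hands them to the core.

```python
class CoreClient:
    """Generic layer: works with an explicit list of storage servers."""

    def __init__(self, servers):
        if not servers:
            raise ValueError("at least one storage server is required")
        self.servers = list(servers)


class InformationSystemStub:
    """Hypothetical stand-in for the IS: maps a resource type to server addresses."""

    def __init__(self, registry):
        self._registry = registry

    def discover(self, resource_type):
        return self._registry.get(resource_type, [])


def build_wrapped_client(info_system):
    # Wrapper layer: discover the storage servers, then delegate to the core.
    servers = info_system.discover("storage")
    return CoreClient(servers)


# Standalone use: the caller supplies the servers directly.
standalone = CoreClient(["localhost:27017"])

# Framework use: the wrapper looks the servers up first.
info_system = InformationSystemStub({"storage": ["node1:27017", "node2:27017"]})
wrapped = build_wrapped_client(info_system)
print(wrapped.servers)
```

Keeping discovery out of the core layer is what lets the same core be reused outside the framework.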

File based access is provided by the following components:

- Core library

- Implements a high-level facade to the remote APIs of the File Storage Service. The core library communicates directly with the File Storage Service that is responsible for storing data. This layer is responsible for splitting files into chunks if their size exceeds a certain threshold and for building metadata such as owner, type of object (file or directory), and access permissions. It also issues commands to the File Storage Service to build the folder tree from metadata where needed.

- Wrapper library

- A wrapper library for the gCube framework. This library captures the resources made available in the gCube framework and passes them to the core library.

- File Storage Service

- A service that is responsible for remote data storage. It is invoked by the core library and can be based on different technologies, such as MongoDB or Terrastore.
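The chunking and metadata responsibilities of the core library can be sketched as follows. The function names, the metadata fields beyond those named above, and the numeric sizes are illustrative assumptions; the source does not state the actual threshold or chunk size.

```python
def split_into_chunks(data: bytes, chunk_size: int, threshold: int) -> list:
    """Split data into fixed-size chunks only when it exceeds the threshold."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if len(data) <= threshold:
        # Small files are stored as a single piece.
        return [data]
    return [data[i:i + chunk_size] for i in range(0, len(data), chunk_size)]


def build_metadata(path: str, owner: str, access: str, chunks: list) -> dict:
    # Metadata fields follow the ones named in the text: owner, object type,
    # and access permissions. The chunk count is an added, hypothetical field.
    return {
        "path": path,
        "owner": owner,
        "type": "file",
        "access": access,
        "chunk_count": len(chunks),
    }


payload = b"x" * 10
chunks = split_into_chunks(payload, chunk_size=4, threshold=8)
meta = build_metadata("/docs/a.bin", "alice", "private", chunks)
print([len(c) for c in chunks], meta["chunk_count"])
```

Joining the chunks in order reproduces the original bytes, which is the property a download would rely on.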

The following diagram illustrates the dependencies between the components of the subsystem:

Deployment

The deployment of this library focuses on installing the File Storage System. The File Storage System is installed statically: it has no capability to scale dynamically with the load of requests. It is therefore very important to choose the installation that matches the needs. Increasing the number of servers dedicated to data storage not only increases the storage capacity but also improves the balance of the data and therefore the response time. On the other hand, if the storage requirements are modest and the number of servers is large, resources will be wasted because they are little used.

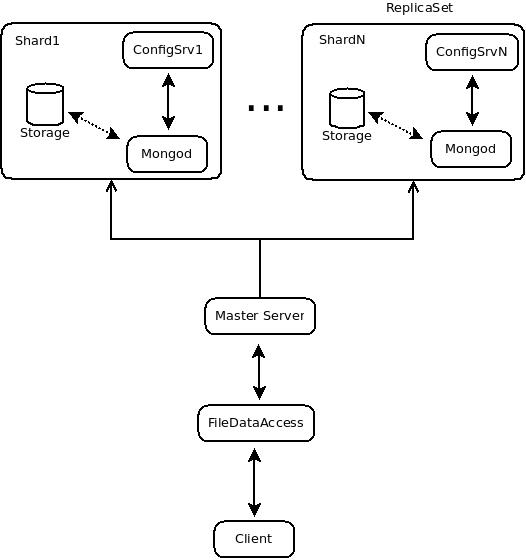

Large Deployment

A large deployment consists of an installation of a cluster of servers dedicated to storage. Our current implementation uses a MongoDB File Storage Service. The servers are organized into MongoDB shards: each shard consists of one or more servers and stores data using mongod processes (mongod being the core MongoDB database process). In a production situation, each shard will consist of multiple servers to ensure availability and automated failover. The set of servers/mongod processes within a shard comprises a replica set.

In MongoDB, sharding is the tool for scaling a system, and replication is the tool for data safety, high availability, and disaster recovery. The two work in tandem yet are orthogonal concepts in the design.
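The way sharding and replication combine can be illustrated with a toy model. This is not how MongoDB is implemented: the `ReplicaSet` and `ShardedStore` classes are hypothetical, the hash-based routing is an assumption chosen for simplicity, and the copies are written synchronously here even though real replication is asynchronous.

```python
import hashlib


class ReplicaSet:
    """Toy shard: every member holds a full copy of the shard's data."""

    def __init__(self, members):
        self.members = {name: {} for name in members}

    def write(self, key, value):
        # Simplification: copy to all members at once (real replication is asynchronous).
        for storage in self.members.values():
            storage[key] = value

    def read(self, key, failed=()):
        # Any surviving member can answer, which is what failover relies on.
        for name, storage in self.members.items():
            if name not in failed and key in storage:
                return storage[key]
        raise KeyError(key)


class ShardedStore:
    """Toy router: each key belongs to exactly one shard."""

    def __init__(self, shards):
        self.shards = shards

    def _shard_for(self, key):
        # Assumed routing rule: a stable hash of the key selects the shard.
        digest = hashlib.sha256(key.encode("utf-8")).digest()
        return self.shards[int.from_bytes(digest[:8], "big") % len(self.shards)]

    def write(self, key, value):
        self._shard_for(key).write(key, value)

    def read(self, key, failed=()):
        return self._shard_for(key).read(key, failed)


cluster = ShardedStore([ReplicaSet(["a1", "a2"]), ReplicaSet(["b1", "b2"])])
cluster.write("chunk-0", b"data")
# The read still succeeds with one member of each shard marked as failed.
print(cluster.read("chunk-0", failed={"a1", "b1"}))
```

Sharding decides which replica set owns a key, so adding shards adds capacity; replication decides how many copies exist inside that shard, so adding members adds resilience. The two settings can be changed independently.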

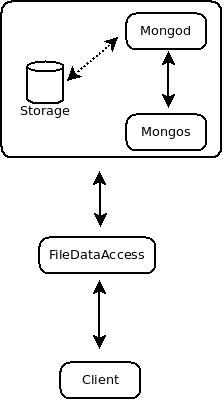

Small Deployment

A small deployment consists of an installation of a single server dedicated to storage. In this case, the installation is very simple, but failover and data replication are not guaranteed, and neither is horizontal scalability. Given all this, we think that a single-server deployment is not the best way to get true durability. We think the right path to durability is replication across many nodes. That is why our current deployment is of the "large deployment" kind.